# Webmon mount NFS folder install #

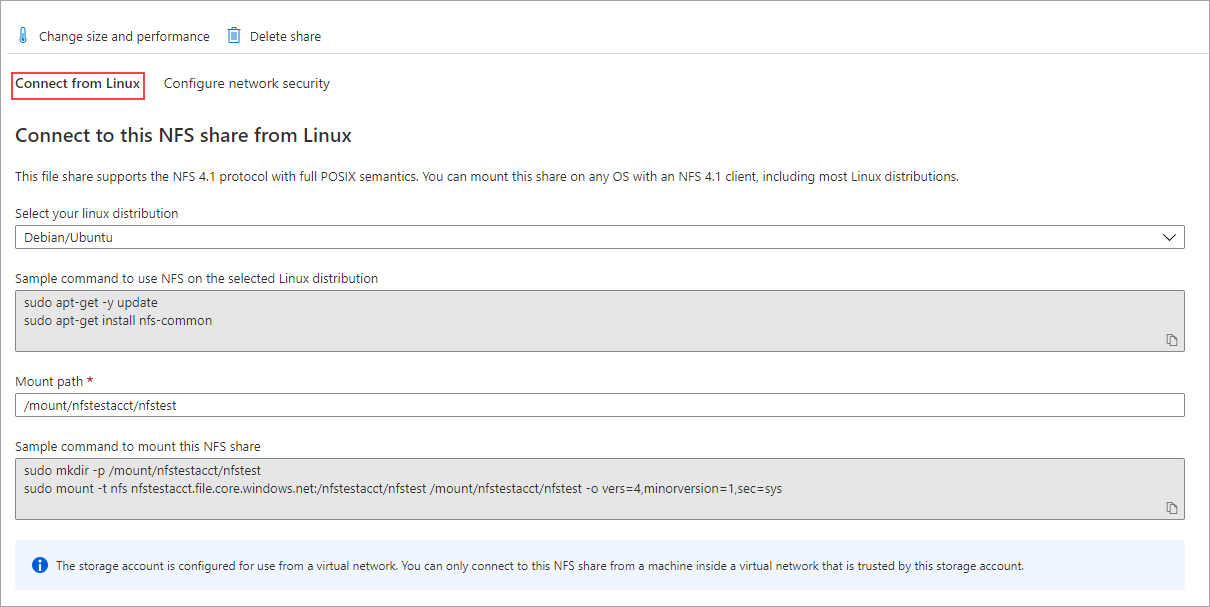

Linux commands to validate synthetic monitors: refer to the following table to validate the synthetic monitor status.

Mount the NFS share by running the following command as root or as a user with sudo privileges: `sudo mount -t nfs 10.10.0.10:/backups /var/backups`, where `10.10.0.10` is the IP address of the NFS server, `/backups` is the directory that the server is exporting, and `/var/backups` is the local mount point.

On Solaris and illumos, if the `-F` option is omitted, `mount` takes the file system type from `/etc/vfstab`. If the resource is listed in `/etc/vfstab`, the command line can specify either the resource or the mount point, and `mount` consults `/etc/vfstab` for the remaining information. `mount_nfs` starts the `lockd(8)` and `statd(8)` daemons if they are not already running.

When mounting the NFS filesystem inside a Docker container, the following settings apply:

- `MOUNTPOINT`: folder inside the Docker container where you want to mount the NFS filesystem. The folder should already exist.
- `FOLDER`: optional; folder inside the NFS export that you want to mount as the root folder.
- `MOUNTOPS`: additional options to pass to the `mount` command (e.g. …).

At home, I tried to run `vagrant up` while connected to our VPN so that I could reach the RPM repo in our office network. The resulting routing table changes and the new network interfaces created by the VPN altered Vagrant's host-to-guest networking. When I switched off/disconnected from the VPN, the Vagrant NFS mount worked (tested repeatedly with Vagrant 1.9.2, 1.9.8 and 2.0.3 against VirtualBox 5.1.34), which confirmed that the VPN is the cause of the NFS `synced_folders` issue.

To work around this, I disconnect from the VPN and run `vagrant up` until the NFS mount succeeds. However, without the VPN I can no longer reach our office RPM repo, and that makes the provisioner fail, because our provisioning script points our CentOS 7 guest OS at an internal RPM repo. At that point, I manually reconnect to the VPN so that the provisioner can continue to pull in our internally managed RPM packages during the guest OS `yum install`.
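One way to confirm that the VPN's routing changes are affecting host-to-guest traffic is to ask the host which interface it would use to reach the guest, once with the VPN disconnected and once with it connected. This is a minimal POSIX sh sketch, assuming a Linux host with iproute2 (`ip route get`); macOS hosts would need `route get` instead. The function names, the guest address `192.168.33.10` and the interface name `vboxnet0` are hypothetical placeholders for your own Vagrant private network.

```sh
#!/bin/sh
# Sketch: check which interface the host uses to reach a Vagrant guest.
# Assumes Linux + iproute2. Function names, 192.168.33.10 and vboxnet0
# are hypothetical placeholders, not values taken from Vagrant itself.

# Read `ip route get` output on stdin and print the word after "dev".
route_dev_from_output() {
  awk '{ for (i = 1; i < NF; i++) if ($i == "dev") { print $(i + 1); exit } }'
}

# Usage: check_route_dev ADDRESS EXPECTED_INTERFACE
# Returns 0 if ADDRESS is reached via EXPECTED_INTERFACE, 1 otherwise.
check_route_dev() {
  dev=$(ip route get "$1" | route_dev_from_output)
  if [ "$dev" = "$2" ]; then
    echo "OK: $1 is routed via $dev"
  else
    echo "WARN: $1 is routed via ${dev:-unknown}, expected $2" >&2
    return 1
  fi
}
```

For example, `check_route_dev 192.168.33.10 vboxnet0` should succeed with the VPN disconnected; if it reports a VPN interface (such as a `tun` device) after connecting, the VPN has pushed a route that overrides the host-only network, which would match the NFS `synced_folders` failure described above.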
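The `mount -t nfs` command and the container settings described earlier could be combined into a small mount helper, for instance in a container entrypoint. This is a minimal sketch, not the image's actual entrypoint: `MOUNTPOINT`, `FOLDER` and `MOUNTOPS` are treated as environment variables with the meanings listed above, while `NFS_SERVER`, `NFS_EXPORT`, `DRY_RUN` and the function name `nfs_mount` are hypothetical names introduced here.

```sh
#!/bin/sh
# Minimal sketch of an NFS mount helper (not a real image's entrypoint).
# MOUNTPOINT, FOLDER and MOUNTOPS follow the settings described above.
# NFS_SERVER, NFS_EXPORT and DRY_RUN are hypothetical names used only here.

nfs_mount() {
  # Fail early if a required variable is missing or empty.
  for var in NFS_SERVER NFS_EXPORT MOUNTPOINT; do
    eval "val=\${$var:-}"
    if [ -z "$val" ]; then
      echo "nfs_mount: $var is not set" >&2
      return 1
    fi
  done

  # The mount point must already exist; mount does not create it.
  if [ ! -d "$MOUNTPOINT" ]; then
    echo "nfs_mount: $MOUNTPOINT does not exist" >&2
    return 1
  fi

  # Build "server:/export[/FOLDER]" so FOLDER becomes the mounted root.
  src="${NFS_SERVER}:${NFS_EXPORT}"
  if [ -n "${FOLDER:-}" ]; then
    src="${NFS_SERVER}:${NFS_EXPORT%/}/${FOLDER#/}"
  fi

  # Assemble the command in the positional parameters to keep quoting intact.
  set -- mount -t nfs
  if [ -n "${MOUNTOPS:-}" ]; then
    set -- "$@" -o "$MOUNTOPS"
  fi
  set -- "$@" "$src" "$MOUNTPOINT"

  # DRY_RUN=1 prints the command instead of running it (no root needed).
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$*"
    return 0
  fi
  "$@"
}
```

For example, with `/var/backups` already present, `DRY_RUN=1 NFS_SERVER=10.10.0.10 NFS_EXPORT=/backups MOUNTPOINT=/var/backups nfs_mount` prints `mount -t nfs 10.10.0.10:/backups /var/backups`, the same command as above without `sudo`; without `DRY_RUN` it runs the mount, which requires root. The sketch sticks to POSIX sh so it also runs in minimal images that ship `/bin/sh` but not bash.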